The internet is becoming a widely used tool for conducting research because it gives people affordable access to a significant amount of information, without geographical boundaries or time limits.

Arts organisations are eager to collect audience data, but the expense and expertise required often prevent them from doing so. Online research tools are becoming more widely available. These websites can be highly customised, easy to use, cost-effective and straightforward to set up and manage. Results can be seen immediately, so arts organisations are able to gauge responses to last night’s performance or event straight away.

This is an exciting development. It means arts organisations with limited budgets will be able to collect immediate audience data. It is also possible to analyse one variable by another; for example, exploring whether higher levels of satisfaction with an event relate to age, gender, income level or any other variable. This opens up possibilities for arts organisations to better understand their audience, which, in turn, can help them with programming and marketing.
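As a rough illustration of analysing one variable by another, the sketch below averages satisfaction scores within each age group. The responses, the age bands and the `mean_by_group` helper are all invented for this example; they do not come from any real survey or from a particular online research tool.

```python
from collections import defaultdict

# Hypothetical survey responses: (age group, satisfaction score from 1 to 5).
# These values are invented for illustration only.
responses = [
    ("18-34", 5), ("18-34", 4), ("18-34", 2),
    ("35-54", 4), ("35-54", 3),
    ("55+", 5), ("55+", 5), ("55+", 4),
]

def mean_by_group(rows):
    """Return the average score for each group label."""
    totals = defaultdict(lambda: [0, 0])  # label -> [sum of scores, count]
    for label, score in rows:
        totals[label][0] += score
        totals[label][1] += 1
    return {label: total / count for label, (total, count) in totals.items()}

satisfaction_by_age = mean_by_group(responses)
for label, average in sorted(satisfaction_by_age.items()):
    print(f"{label}: {average:.2f}")
```

With so few responses per group, differences like these would not support any firm conclusion; the point is only to show the shape of the calculation.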

But, as with every new research or evaluation tool or platform, it is important to understand its limitations. There are two significant limitations to these new online evaluation and research platforms:

1. Relevance and reliability of information

The internet enables us to access a considerable amount of information on a particular topic. But the reliability or relevance of that information can be uncertain, particularly when it comes to analysing qualitative information; that is, the rich, open-ended material that includes what people say and how they say it. Some new research software programs promise to uncover subtle connections from a wide range of sources including interviews, focus groups, surveys and audio. But the reliability of research analysis comes down to the skill of the researcher or evaluator in making those connections. They apply their contextual knowledge, experience and ability to make sense of a wide range of input and apply it to a problem.

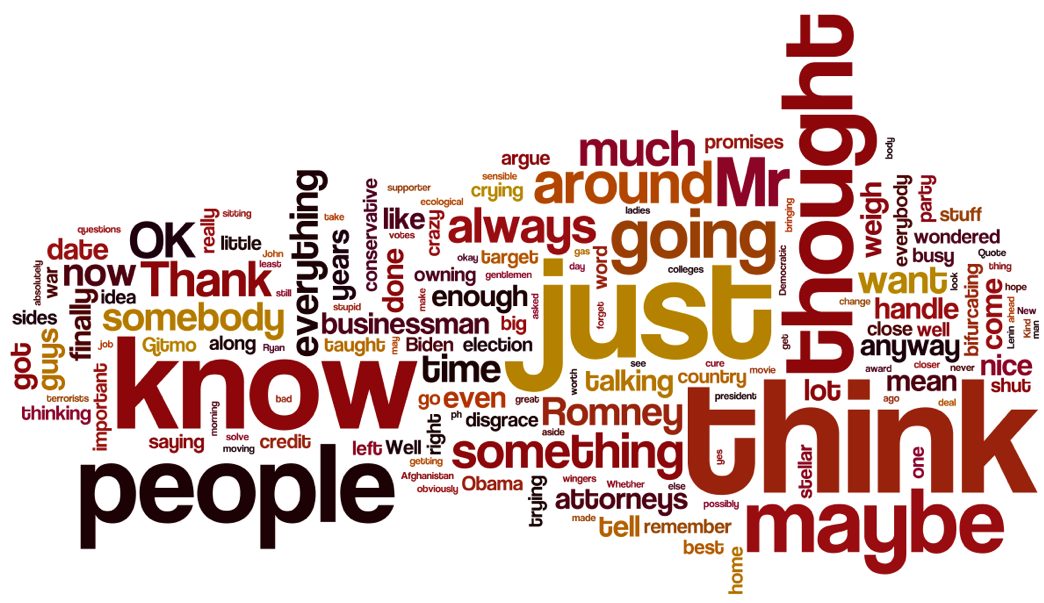

You may be familiar with the word cloud. A word cloud is a visual representation of text data, typically used to depict keywords from a particular text such as a survey. The format lets the reader quickly perceive the most dominant words within the given text by applying a graphic hierarchy that shows their relative prominence. The limitation of word clouds is that they are only the crudest form of textual analysis and are often applied to situations where textual analysis is not appropriate. Additionally, they often highlight the signifiers or intensifiers, rather than the words that carry the relevant meaning.
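The frequency counting that sits behind a word cloud can be sketched in a few lines. The comments below are invented, and `word_counts` is a hypothetical helper rather than the method of any particular word cloud tool; real tools typically also filter out common words such as "the".

```python
import re
from collections import Counter

# Hypothetical open-ended survey comments, invented for illustration.
comments = [
    "The lighting was really very good",
    "Really enjoyed it, very moving",
    "Very long, and the seats were really uncomfortable",
]

def word_counts(texts):
    """Count lower-cased words across all texts, as a word cloud would."""
    words = []
    for text in texts:
        words.extend(re.findall(r"[a-z']+", text.lower()))
    return Counter(words)

counts = word_counts(comments)
print(counts.most_common(3))
```

In this small sample, "very" and "really" each appear three times, more than any other word, so they would be drawn largest. "Uncomfortable", arguably the most useful word for the organisation, appears only once.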

2. Evaluation is more than just research

It is one thing to collect data. But data is ‘dead’ until it is analysed, made sense of and had implications drawn from it; that is research. Evaluation is another discipline. An evaluation may be an analysis of how a program or activity has performed according to a stated set of objectives or aims. Or it could be an assessment of the success of an artistic event, performance or activity. But whatever its intention, the most successful evaluation comes when experienced evaluators interact with real people, in real time (perhaps also with online data thrown into the mix). As an organisation or government department, you are not buying a focus group or simply a survey. You are buying expertise. Ideally, you are purchasing the skills of someone trained and experienced in interpreting the results. No software program can understand the nuances of qualitative research findings. Data is really just the raw material in the research and evaluation process. The real value is added when a trained consultant analyses and interprets the dead data.

Conclusion

It is a positive and exciting development that new online tools are providing a convenient, cost-effective way to access data, particularly for organisations such as those within the arts sector that have not been able to access this sort of information before. If an arts organisation is interested in collating some very simple data, for example to understand who attended a performance and the demographic makeup of those people, online research tools can be useful. But pulling together real meaning from evaluation reports, research data, interviews and discussions requires the interpretative skills of a human. It is one thing to access data; it is another to make good sense of it.